50 Mental Models to Think Like a High-Level Generalist

50 disciplines. 50 big ideas. 50 mental models. 10 posts.

If you don’t think like a high-level generalist, you’re already paying for it.

In opportunity cost. In repeated mistakes. In blind spots that show up at the worst possible time.

Charlie Munger put it simply:

“The man who needs a new thinking tool but hasn’t yet acquired it is already paying for it.”

His fix is simple, demanding, and, most importantly, repeatable:

Learn the big ideas from the big disciplines.

Then reuse them everywhere: to decide, analyze, evaluate.

“I’m a big fan of knowing the big ideas in pretty much all the disciplines … and then using those routinely in your judgments. That’s just my system.”

So I decided to do the work: build my own Swiss Army Knife for thinking, in public.

To do that, I’m going to map the big ideas across the big disciplines, run them through a filter, then turn them into ready-to-use mental models.

Here’s how it’s going to work:

1 post a week for 10 weeks (on top of the usual weekly post).

Each post: 5 disciplines, 5 big ideas, 5 mental models.

And to go deeper: graded sources, from “I’m discovering” to “I want to suffer.”

I’ve been working on this project for weeks. It’s ready. And it starts now.

Seismology: Self-Organized Criticality

The Big Idea

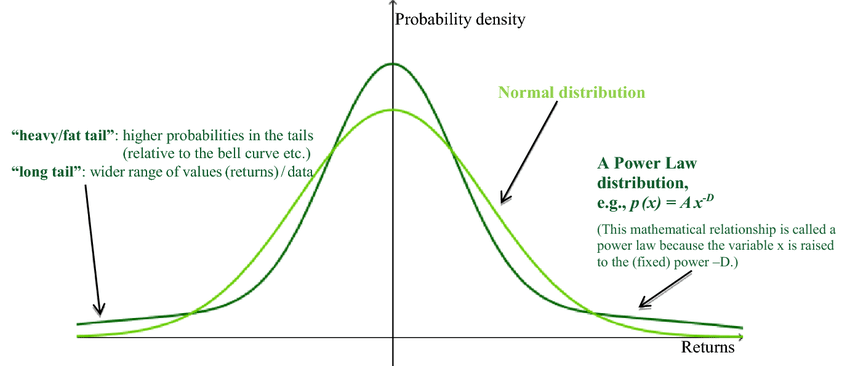

In seismology, the Gutenberg–Richter law says two main things:

The higher the magnitude of earthquakes, the lower their frequency.

But frequency doesn’t fall off “normally” (in the sense of a normal distribution): it follows a power law in the energy released.

Translation: truly extreme earthquakes are rare, but clearly not as rare as a normal distribution would allow.
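To put numbers on it, here’s a minimal sketch of the Gutenberg–Richter relation, log10(N) = a - b * M, where N is the yearly count of earthquakes of magnitude M or more. The values a = 8 and b = 1 are illustrative assumptions (b close to 1 is typical, a depends on the region and the period), and the function names are mine.

```python
def yearly_count(magnitude, a=8.0, b=1.0):
    """Expected number of earthquakes per year with at least this magnitude.

    Gutenberg-Richter: log10(N) = a - b * M.
    a and b are illustrative assumptions, not fitted values.
    """
    return 10 ** (a - b * magnitude)


def energy_ratio(magnitude_gap):
    """How many times more energy is released when magnitude rises by this gap.

    Uses the standard scaling log10(E) = 1.5 * M + constant.
    """
    return 10 ** (1.5 * magnitude_gap)


if __name__ == "__main__":
    for m in (5, 6, 7, 8):
        print(f"M >= {m}: about {yearly_count(m):,.0f} per year")
    print(f"M8 vs M5: {yearly_count(5) / yearly_count(8):,.0f}x rarer, "
          f"{energy_ratio(3):,.0f}x more energy")
```

Under these assumptions, a magnitude 8 is 1,000 times rarer than a magnitude 5 while releasing roughly 31,600 times more energy. Frequency shrinks as a power of energy, not exponentially, and that is what keeps the tail fat.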

Distributions with that property show up in systems with equally unusual characteristics:

They accumulate constraints slowly, but surely.

They release them suddenly, in jolts.

In seismology, those constraints are created by the continuous motion of lithospheric plates, which builds tectonic stress in the Earth’s crust. Slowly, but surely.

That’s self-organized criticality[1].

More rigorously: it’s when a system has the critical point as an attractor, due to its intrinsic dynamics.

It continuously drives itself toward a near-critical state, one that’s just waiting to flip into a more-or-less extreme event, triggered by any of a wide range of sparks.
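The concept was born from a sandpile model, and that model fits in a few lines. What follows is a rough sketch, not a faithful reproduction of the original paper: grains land one at a time on a grid, any cell holding 4 or more grains topples and sends one grain to each of its four neighbors, and grains that cross the edge are lost. Grid size, number of grains, and the seed are arbitrary choices of mine.

```python
import random


def drop_grain(grid, row, col):
    """Add one grain at (row, col), relax the pile, return the number of topplings."""
    n = len(grid)
    grid[row][col] += 1
    topplings = 0
    unstable = [(row, col)]
    while unstable:
        r, c = unstable.pop()
        if grid[r][c] < 4:
            continue  # already relaxed by an earlier toppling
        grid[r][c] -= 4
        topplings += 1
        if grid[r][c] >= 4:
            unstable.append((r, c))
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            rr, cc = r + dr, c + dc
            if 0 <= rr < n and 0 <= cc < n:  # grains leaving the grid are lost
                grid[rr][cc] += 1
                if grid[rr][cc] >= 4:
                    unstable.append((rr, cc))
    return topplings


def simulate(size=10, grains=2000, seed=0):
    """Drop grains at random sites; return the final grid and every avalanche size."""
    rng = random.Random(seed)
    grid = [[0] * size for _ in range(size)]
    sizes = []
    for _ in range(grains):
        sizes.append(drop_grain(grid, rng.randrange(size), rng.randrange(size)))
    return grid, sizes


if __name__ == "__main__":
    _, sizes = simulate()
    quiet = sum(1 for s in sizes if s == 0)
    print(f"{quiet} grains did nothing; the largest avalanche toppled {max(sizes)} cells")
```

Every grain is identical. The only thing that changes between a grain that does nothing and a grain that sets off a large avalanche is the state of the pile it lands on.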

If that definition feels familiar, that’s normal. There’s a good chance you’ve seen a “documentary” about it:

Financial markets are textbook cases of self-organized criticality.

For years, the system stacks constraints: leverage, mispriced risk, “crowded” positions, hidden correlations, and last but not least, illusory liquidity.

Then comes the spark (sometimes tiny) that triggers a cascade of causes and consequences that makes history:

Drop → forced selling & margin calls → liquidity dries up → further drops → again and again.

This kind of logic is widely distributed in the universe. You find it in:

Avalanches: the more snow accumulates, the higher the odds that a few extra snowflakes are enough to trigger a break.

Revolutions / social contagion: a local event can serve as the trigger for a dynamic that was already under extreme tension. A “recent” example is the Arab Spring, which began in December 2010 after Mohamed Bouazizi, a street vendor, set himself on fire in public in Tunisia.

Wildfires: “fuel” builds up for weeks (dried vegetation, low humidity, wind, etc.), then comes the spark (literally).

The Mental Model

Systems where extremes are structurally more likely correspond to fat-tailed distributions.
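To get a feel for what “fat-tailed” means, here’s a small comparison of how fast the odds of an extreme shrink under a normal distribution and under a power law (a Pareto distribution). The exponent alpha = 2 and the minimum x_min = 1 are arbitrary assumptions, and the two distributions are not matched in scale. Only the speed of the decay matters here.

```python
import math


def normal_tail(k):
    """P(Z > k) for a standard normal variable."""
    return 0.5 * math.erfc(k / math.sqrt(2))


def pareto_tail(k, alpha=2.0, x_min=1.0):
    """P(X > k) for a Pareto variable (alpha and x_min are illustrative assumptions)."""
    if k <= x_min:
        return 1.0
    return (x_min / k) ** alpha


if __name__ == "__main__":
    for k in (2, 5, 10):
        print(f"beyond {k}: normal {normal_tail(k):.2e}, power law {pareto_tail(k):.2e}")
```

Each step further out costs the normal distribution many orders of magnitude, while the power law only loses a constant factor. In that second world, “it has never happened” is weak evidence.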

Being able to spot this kind of system completely changes risk management: the focus stops being “predict the trigger” (it can be too many things, at too many times), and shifts to quantifying fragility.

For that, here’s a 6-point checklist to diagnose this kind of system:

Constraint: is pressure building or dissipating?

Feedback loops: does the system dampen shocks or amplify them?

Dependence on stability: does it “work” only when everything is going well?

Coupling: does a break stay local or spread as a cascade?

Margins / redundancy: is there slack when something breaks, or at least a fallback route?

Failure mode: is the breakdown gradual or threshold-based?
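If you want to keep track of that checklist over time, here’s one possible way to write it down. It’s a toy sketch: the field names are mine, each flag is True when the answer points toward fragility, and weighting the six questions equally is an assumption, not a claim about how much each one matters.

```python
from dataclasses import dataclass, fields


@dataclass
class FragilityCheck:
    """One answer per checklist question; True means the fragile answer."""
    pressure_building: bool      # Constraint
    shocks_amplified: bool       # Feedback loops
    needs_stability: bool        # Dependence on stability
    breaks_cascade: bool         # Coupling
    no_slack: bool               # Margins / redundancy
    threshold_failure: bool      # Failure mode

    def score(self):
        """Number of fragile answers, from 0 to 6 (equal weights are an assumption)."""
        return sum(getattr(self, f.name) for f in fields(self))


# Hypothetical example: a leveraged position in a crowded trade
crowded_trade = FragilityCheck(
    pressure_building=True,
    shocks_amplified=True,
    needs_stability=True,
    breaks_cascade=True,
    no_slack=False,
    threshold_failure=True,
)
```

The number itself matters less than its direction: a score that creeps up from one review to the next is the signal to cut exposure.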

This kind of analysis (deeper, I’ll admit) could have helped regimes avoid collapse, municipalities invest more in wildfire prevention, or funds (like Long-Term Capital Management[2]) avoid going under.

In practice: when fragility rises, the priority isn’t being right about the scenario. It’s reducing exposure to the cascade.

Because the only certainty is that it’s coming. Inevitably.

Artificial Life: Complexity and Pattern Can Emerge from Simple and Local Rules

The Big Idea

I’m going to do some proselytizing: this field is genuinely FASCINATING. If you’re even slightly curious about life, emergence, and where patterns come from, do yourself a favor and go down this rabbit hole. It’s almost a personal career regret… anyway.

Being a researcher in artificial life means building and analyzing computer simulations from a handful of rules, then watching how those structures evolve over time.

In its most rudimentary form, Conway’s Game of Life, you start with a grid of cells (on/off), and you add:

local rules:

a live cell survives if it has 2 or 3 live neighbors; otherwise it dies.

a dead cell becomes live if it has exactly 3 live neighbors; otherwise it stays dead.

an initial state: the configuration at t = 0 (the starting distribution of cells)

Then you let it run.
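Here’s what “letting it run” can look like in code. It’s a minimal sketch of the two rules above on an unbounded grid, storing only the live cells. The function names and the set-based representation are my choices, one of many ways to do it.

```python
from collections import Counter

# The 8 cells surrounding any given cell
NEIGHBOR_OFFSETS = [
    (dr, dc) for dr in (-1, 0, 1) for dc in (-1, 0, 1) if (dr, dc) != (0, 0)
]


def step(live_cells):
    """Advance one generation. live_cells is a set of (row, col) pairs."""
    neighbor_counts = Counter(
        (row + dr, col + dc)
        for row, col in live_cells
        for dr, dc in NEIGHBOR_OFFSETS
    )
    return {
        cell
        for cell, count in neighbor_counts.items()
        # birth or survival with 3 neighbors, survival only with 2
        if count == 3 or (count == 2 and cell in live_cells)
    }


def run(live_cells, generations):
    """Apply the rules repeatedly and return the final set of live cells."""
    for _ in range(generations):
        live_cells = step(live_cells)
    return live_cells


# Initial state at t = 0: a "glider"
glider = {(0, 1), (1, 2), (2, 0), (2, 1), (2, 2)}

if __name__ == "__main__":
    print(sorted(run(glider, 4)))
```

After 4 generations the glider reappears intact, one row down and one column to the right. Nothing in `step` mentions movement.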

And that’s where the magic happens (or rather reality):

From two or three ridiculously simple rules, you start seeing extraordinary configurations emerge[3]:

stable patterns,

structures that move,

“organisms” that seem to reproduce, feed, or interact.

Make no mistake: nobody coded those “behaviors.” They emerge.

And this is the basic case. You can take it much further:

move from a grid to continuous space,

add variables (color, energy, resources),

introduce randomness, selection, constraints, etc.,

and watch dynamics emerge that are infinitely richer, so rich that biological life starts to feel almost… banal.

Once you’ve gone deep enough, you won’t look at “simplicity”, or even “life”, the same way again.

The Mental Model

Complexity and patterns can emerge from simple, local rules.

Two central ideas fall out of that, mirror images of each other:

A starting situation with very simple rules can quickly produce complexity that’s hard to wrap your head around.

A situation that looks insanely complex and random can come from rules that are simple and deterministic.

In a field where the expected level of analysis often requires you to dissect situations that seem overwhelmingly complex (like finance, and probably every other discipline), it can be a lifesaver to remember that a few simple, decisive rules are often hiding underneath that complexity.

Climatology: Think in Fields, Not Points

The Big Idea

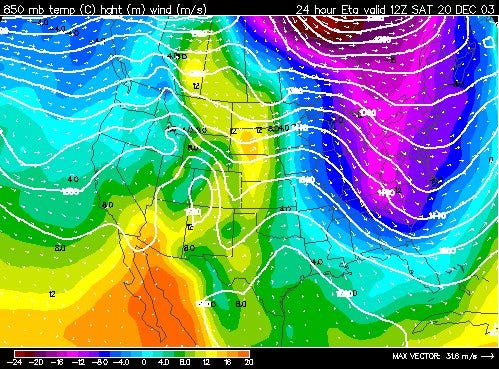

The concept of a field is central in meteorology.

Concretely, a field is a map where every point on the map is associated with a value, or several values.

Weather is “simply” the simultaneous evolution of multiple fields (pressure, temperature, geopotential height, etc.).

Thinking in “points” is like examining a single tree in the middle of a forest: you lose the overall structure, the gradients (the differences), and above all the dynamics that create the “whole.”

Thinking in “fields” is looking at the map instead of a few pixels: the overall shape, and how it deforms across space and time.
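A tiny sketch of the difference, using a made-up temperature field on a 5 x 5 grid. The values are hypothetical, and central differences are just one simple way to estimate a gradient.

```python
def gradient_at(field, row, col):
    """Central-difference gradient (change per row, change per column).

    Only valid for interior points of a rectangular grid.
    """
    d_row = (field[row + 1][col] - field[row - 1][col]) / 2
    d_col = (field[row][col + 1] - field[row][col - 1]) / 2
    return d_row, d_col


# Hypothetical field: temperature rises by 2 degrees per column, flat across rows
temperature = [[10 + 2 * col for col in range(5)] for _ in range(5)]

point_reading = temperature[2][2]             # the "point": a single number
local_gradient = gradient_at(temperature, 2, 2)  # the "field": which way it changes

if __name__ == "__main__":
    print(f"reading: {point_reading}, gradient: {local_gradient}")
```

The point reading says “14 here.” The gradient says “flat along one axis, 2 degrees warmer per step along the other,” which is the part that tells you what is likely to arrive next.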

The Mental Model

The advantage of this big idea is that points are everywhere: prices, ratings, metrics, etc.

The two fundamental questions are:

What field is hiding behind these points?

What moves it and what makes it vary?

Reasoning in terms of fields means stepping up one level: you focus on the big trends (the fields, often invisible to us) that determine the local variables (the points), the very things that saturate our attention.

It also means accepting a bit less local precision as you move up a level of abstraction, in exchange for better global relevance.

Better to be vaguely right than precisely wrong.

Probabilities: Bayesian Thinking

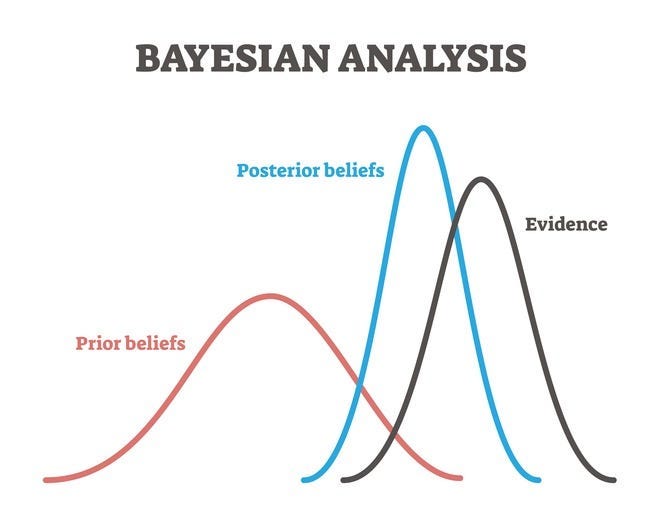

The Big Idea

The universe is too complex for our brains, in a computational sense. We don’t have the time, the horsepower, or the data.

So we do the only sensible thing: we replace all-or-nothing with degrees of confidence.

In an environment where information is uncertain and arrives continuously (so: our universe), Bayesian probability is the reference tool:

You start with one or more priors: what you already believe (or what you assume, for lack of anything better).

You estimate the probability of a claim based on those priors.

You update that probability with every new piece of information.
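Those three steps map directly onto Bayes’ rule. Here’s a minimal sketch for a single claim, with invented numbers: the prior of 0.5 and the 0.8 / 0.2 likelihoods are assumptions chosen to keep the arithmetic readable.

```python
def update(prior, p_if_true, p_if_false):
    """Bayes' rule for one claim and one piece of evidence.

    prior:      P(claim) before seeing the evidence
    p_if_true:  P(evidence | claim is true)
    p_if_false: P(evidence | claim is false)
    Returns P(claim | evidence). Not defined if the evidence is impossible
    under both hypotheses.
    """
    weight_true = prior * p_if_true
    weight_false = (1 - prior) * p_if_false
    return weight_true / (weight_true + weight_false)


# Hypothetical case: start undecided, then see three independent clues,
# each four times more likely if the claim is true than if it is false.
belief = 0.5
history = [belief]
for _ in range(3):
    belief = update(belief, p_if_true=0.8, p_if_false=0.2)
    history.append(belief)

if __name__ == "__main__":
    print([round(b, 3) for b in history])
```

The slider moves from 0.5 to 0.8, then to about 0.94, then to about 0.98. It keeps climbing but never reaches 1, so there is always room for the next piece of evidence to push it back.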

In a Bayesian frame, a “fact” is a belief attached to a slider between 0 and 1. Ideally, our picture of the world should be a set of such sliders, each updated regularly.

If that feels too abstract and unusable in practice, understand this: it’s because of Bayesian probabilities that we can understand each other (among other things).

A baby doesn’t learn language because it has a grammar manual encoded in its genes. It learns like a Bayesian, through successive updates:

it watches mouth movements,

matches sounds to those patterns,

tries to reproduce them,

then adjusts its hypotheses based on feedback and the regularities it perceives.

In other words, its beliefs refine little by little, over months and years, as evidence accumulates, until language becomes coherent.

That doesn’t disappear in adulthood. Our unconscious is naturally Bayesian.[4] In everyday language, that shows up as instinct and intuition.

Our conscious mind, on the other hand, is much less Bayesian, but we can train it!

The Mental Model

When you touch something as fundamental as information (that’s for a future post), the upside is that it transfers easily.

In practice, it’s just applying Bayesian principles:

Make your prior / hypothesis explicit, and your confidence level.

Make explicit what claim your belief is about.

Attach a number or a range (ideally) between 0 and 1 (watch out for false precision → 0.743).

Update as soon as new information arrives.

Improve your estimates from the feedback reality gives you (sometimes very brutally).
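For the last step, you need some way to score yourself against reality. One common yardstick is the Brier score, the average squared gap between the probability you gave and what actually happened. That’s my choice of tool here, not the only one, and the track record below is invented.

```python
def brier_score(forecasts, outcomes):
    """Mean squared gap between stated probabilities and outcomes (1 or 0).

    0 is a perfect record; always answering 0.5 scores 0.25.
    """
    if not forecasts or len(forecasts) != len(outcomes):
        raise ValueError("need one outcome per forecast, and at least one forecast")
    total = sum((p - o) ** 2 for p, o in zip(forecasts, outcomes))
    return total / len(forecasts)


# Hypothetical track record: four claims, the confidence given, and what happened
my_forecasts = [0.9, 0.7, 0.2, 0.6]
what_happened = [1, 1, 0, 0]

my_score = brier_score(my_forecasts, what_happened)
coin_flip_score = brier_score([0.5] * 4, what_happened)

if __name__ == "__main__":
    print(f"mine: {my_score:.3f}, always saying 50%: {coin_flip_score:.3f}")
```

Here the record scores 0.125 against 0.25 for someone who always says 50%. Tracking that number over a few dozen claims is a simple way to see whether your sliders are actually getting better.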

The more we apply these principles, the more rational we become, the less we’re affected by bias, and the more successful we are, in general. It’s probably the best way to raise our Rationality Quotient.

Systems Theory I: Recognize the Story, Regardless of the Set

The Big Idea

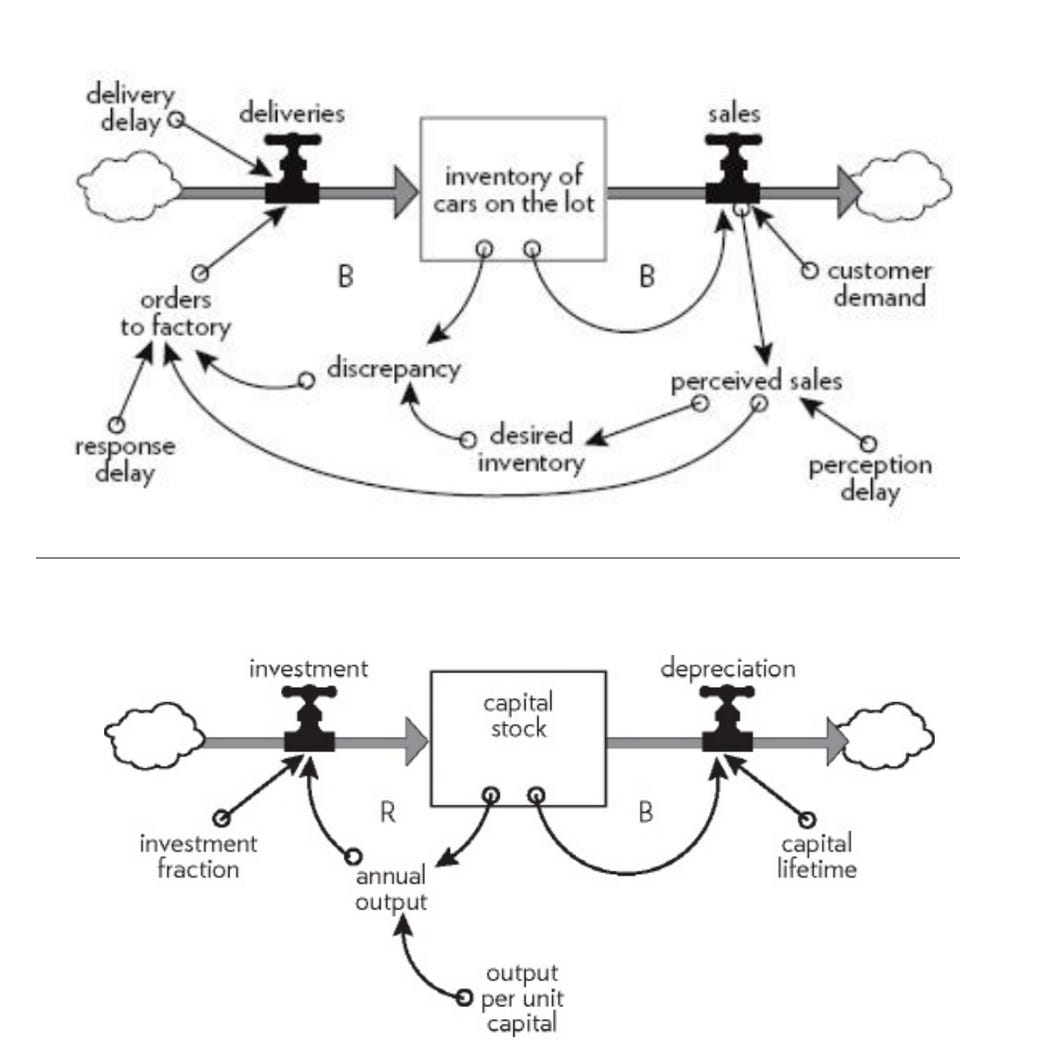

In short, systems are organized complexity. More formally, a system is a whole made up of:

elements

that interact with each other

with defined boundaries

inputs and outputs (information, energy, capital, etc.)

states (temperature, price, social status, etc.)

Here are two examples of systems:

No matter your domain, that definition should ring a bell: an organization, a technology, a product, a customer base, a portfolio, a family… these are all systems.

The real mental leap is to stop looking only at “things,” and start looking at the shape they share: interaction patterns, feedback loops, constraints, delays, flows.

That’s the famous “think outside the box.”

The earthquakes and financial crashes at the beginning of this post illustrate it perfectly. On the surface, they have nothing to do with each other. But if you look at them as systems, the common points jump out:

stress accumulation,

sudden release in bursts,

and therefore a much higher probability of extreme events than “normal” intuition suggests.

Same story. Different set.[5]

The Mental Model

By recognizing these patterns, you can anticipate the dynamics before experience has to teach you the same lesson again, just in a different setting.

Here the framework is:

Level up: describe the situation as a system by making its components explicit (focus on the story).

Transpose: reuse what you learned from system A to illuminate system B (even if they look unrelated).

Act based on that transposition.

The hardest part is obviously recognizing the patterns. But taking time to analyze a situation after the fact, again and again, is one of the best ways to build your personal library of patterns.

Some people call that wisdom.

And there we go: the first 5 mental models in our Swiss Army Knife. I already can’t wait to share the next 5.

As much as I genuinely enjoy working on this project, the main goal is still for these tools to reach as many people as they can actually help.

So if you think this post could be useful to someone else, share it.

This project is 100% free, and it will stay that way.

As for me, I’m going back to prepping the next one.

Take care.

Masters of Compounding

PART 2 is already out:

Clonal Selection

Overfitting

Thick Description

Overconsumption of Complexity

The Expectancy-Value Trick.

I did my best to make it as valuable for you to read as it was for me to write.

Sources

1. Seismology: Self-Organized Criticality

An introductory book that’s surprisingly complete despite having no math. There’s a certain poetry to it (and to seismology in general): Susan Elizabeth Hough — Earthshaking Science: What We Know (and Don’t Know) About Earthquakes

The foundational paper on self-organized criticality: Bak, P., Tang, C., & Wiesenfeld, K. (1987). Self-organized criticality: An explanation of 1/f noise. Physical Review Letters.

The first direct application of self-organized criticality to earthquakes: Bak, P., & Tang, C. (1989). Earthquakes as a self-organized critical phenomenon. Journal of Geophysical Research.

2. Artificial Life: Complexity and Pattern Can Emerge from Simple, Local Rules

Please, read this: Artificial Life: A Report from the Frontier Where Computers Meet Biology — Steven Levy.

ISAL (International Society for Artificial Life) provides a complete online bibliography covering the full range of topics studied in artificial life. Pick what interests you.

Probably my favorite paper on the topic, and one of my favorite papers in any field. A gem on the deep links between these computer simulations and biological life: Bartlett, S., & Wong, M. L. (2025). Lyfe: learning to learn better. Interface Focus, 15(6).

3. Climatology: Think in Fields, Not Points

A great introductory book (a bit long, but very digestible): C. Donald Ahrens — Meteorology Today: An Introduction to Weather, Climate, and the Environment.

For a deep dive into field-based reasoning in meteorology (you’ll have to hang on): Hersbach, H., Bell, B., Berrisford, P., Hirahara, S., Horányi, A., Muñoz‐Sabater, J., ... & Thépaut, J. N. (2020). The ERA5 global reanalysis. Quarterly Journal of the Royal Meteorological Society, 146(730), 1999–2049.

4. Probabilities: Bayesian Thinking

Probably the best source on Bayesianism without math: “Bayesian Epistemology” (Stanford Encyclopedia of Philosophy).

How the brain is built in a Bayesian way: Knill & Pouget (2004), Trends in Neurosciences.

For those curious about how a Bayesian framework can explain how infants acquire their native language: Romberg, A. R., & Saffran, J. R. (2010). Statistical learning and language acquisition. Wiley Interdisciplinary Reviews: Cognitive Science, 1(6), 906–914.

5. Systems Theory I: Recognize the Story, Regardless of the Set

The best introductory book on the topic, in my opinion. A bestseller I’d recommend to anyone, read it, then reread it: Donella H. Meadows — Thinking in Systems: A Primer

A massive tome on applying systems thinking to economics and business. Very long, very interesting, and absolutely worth it, but at times you really have to hang on: John D. Sterman — Business Dynamics: Systems Thinking and Modeling for a Complex World

DISCLAIMER:

I’m not a domain expert in every field I’m drawing from. I’m simply an investor with a lot of curiosity, and a lot of time to feed it. If you spot an empirical error or want to add nuance, I’d love your corrections: my goal is accuracy and usefulness.

If you’re an expert in any domain, WHATEVER IT IS, and you have a “big idea” that generalizes well, please don’t hesitate to suggest it. Drop it in the comments, DM me, or write a note and tag me. I’d genuinely love to include it. Of course, I’ll credit you in the post.

[1] Self-organized criticality originally comes from statistical physics. But I’m introducing it through seismology for a few reasons:

In practice, it shows up more naturally in seismology than in most places you’ll first encounter it in physics.

Earthquakes make the intuition click faster than statistical mechanics ever will (in my opinion).

And yes, the concept was born from studying sandpiles, which is basically geology’s cousin… (I’m joking. Half-joking.)

[2] The LTCM blow-up has been on my topic list since the day I started this Substack. Even though I’ve pushed it back more times than I’d like to admit, I’m going to cover it. One day.

[3] These phenomena may actually be pretty ordinary, just like protozoan cells, mitochondria, jellyfish, plants, and basically any living organism.

What feels extraordinary probably comes more from the existence of an initial state (the singularity that gave rise to our universe) and from the rules of the game (our physical laws) than from the game’s evolution at any given time (me writing this footnote right now).

[4] To be more rigorous, the “Bayesian brain” remains a model, and it hasn’t been fully proven.

[5] I could formalize this as a bijection, but I’m keeping set theory for a later post.
